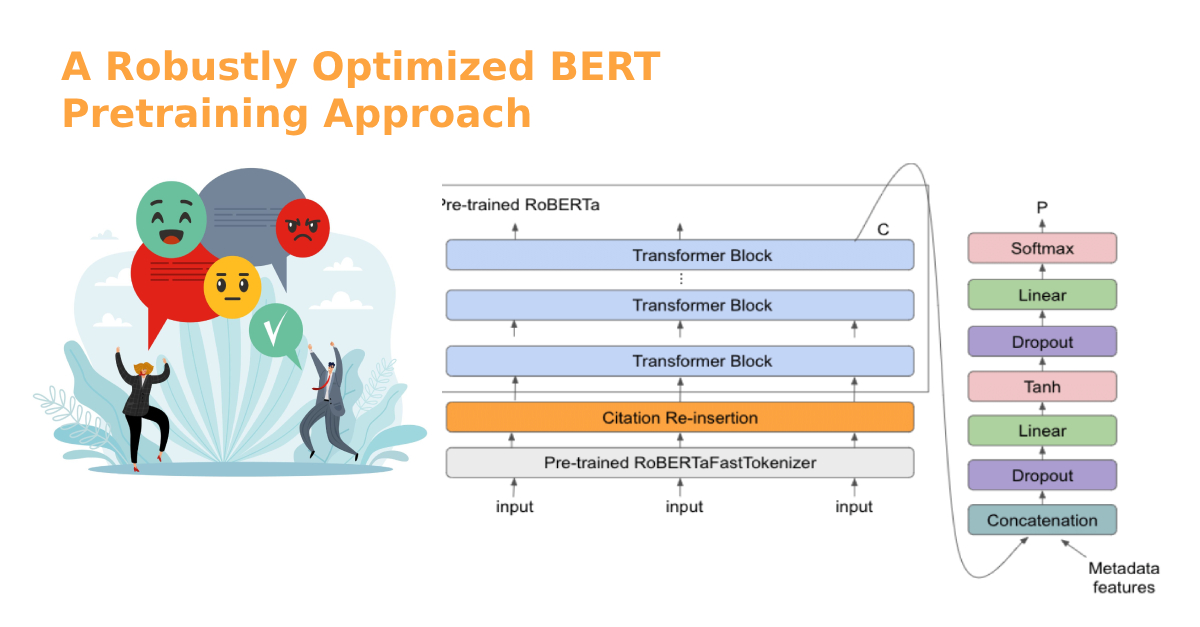

RoBERTa stands for “A Robustly Optimized BERT Pretraining Approach.” This is a model created by Facebook’s AI team, and it’s essentially an optimization of BERT […]

Tag: AI

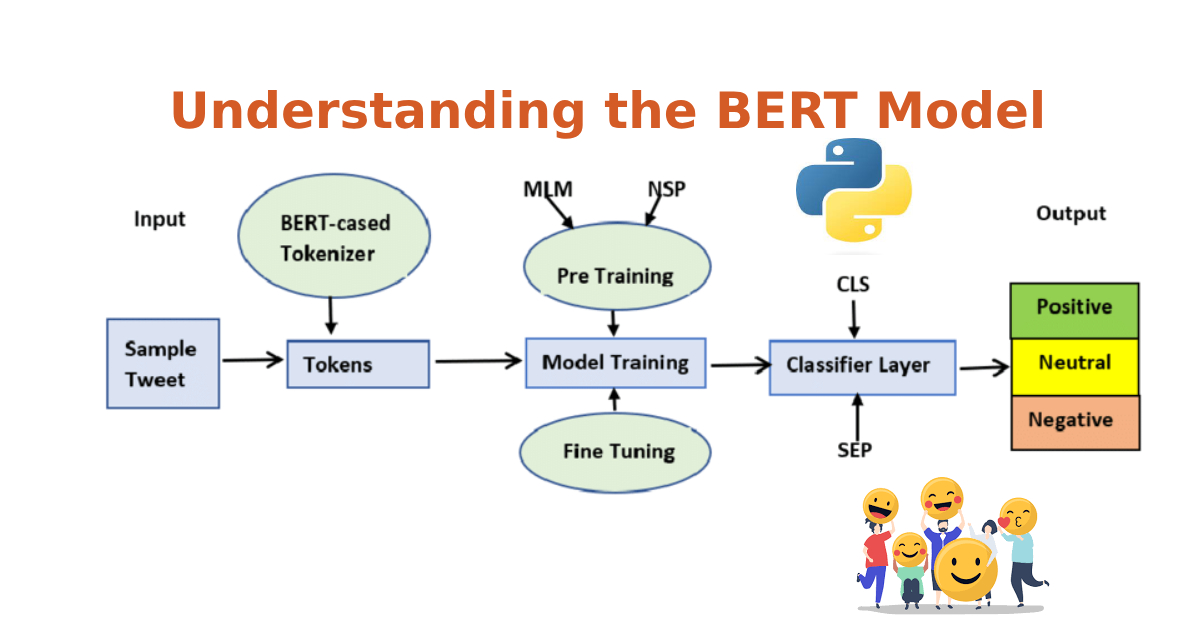

Understanding the BERT Model

BERT, which stands for Bidirectional Encoder Representations from Transformers, has revolutionized the world of natural language processing (NLP). Developed by researchers at Google in 2018, […]

Paving the Path to Tomorrow’s Innovations

The world of technology is ever-evolving, with new inventions reshaping our reality at a previously unimagined pace. Machine learning (ML), a subset of artificial intelligence […]